A key challenge for many medium to large businesses is the management of multiple database server instances across the organization. SQL Server has always had a pretty good story with regard to multi-server management through automated multi-server jobs, event forwarding, and the ability to manage multiple instances from a single administrative console. In SQL Server 2008, Microsoft introduced a new solution called Data Collector for gathering key server performance data and centralizing it in a management data warehouse; and in SQL Server 2008 R2, this technology underpins a new way to proactively manage server resources across the enterprise.

With SQL Server 2008 R2, database administrators can define a central utility control point (UCP) and then enroll SQL Server instances from across the organization to create a single, central dashboard view of server resource utilization, based on policy settings that determine whether a particular resource is being over-, under-, or well utilized. So, for example, a database administrator in an organization with multiple database servers can see at a glance whether or not overall storage and CPU resources across the entire organization are being utilized appropriately, and can drill down into specific SQL Server instances where over- or under-utilization is occurring to identify where more resources are required (or where there is spare capacity).
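To make the "at a glance, then drill down" idea concrete, here is a minimal Python sketch of a dashboard roll-up. It is purely illustrative and not how the utility is implemented: the `summarize` function, the instance names, and the `"over"`/`"under"`/`"well"` labels are all assumptions made up for this example.

```python
from collections import Counter

def summarize(health):
    """Roll per-instance health up into a dashboard-style summary.

    `health` is a hypothetical mapping of instance name -> state,
    where state is one of "over", "under", or "well".
    """
    # How many instances fall into each state (the at-a-glance view).
    counts = Counter(health.values())
    # Instances worth drilling into: anything that is not well utilized.
    needs_attention = sorted(
        name for name, state in health.items() if state != "well"
    )
    return counts, needs_attention

# Hypothetical instance names, for illustration only.
example_health = {"SRV1": "well", "SRV1\\TEST": "over", "SRV2": "under"}
example_counts, example_attention = summarize(example_health)
```

The summary counts correspond to the dashboard totals, and the list of instances needing attention is where you would start drilling down.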

Sounds pretty powerful, right? So you’d expect it to be complicated to set up and configure. However, as I hope to show in this article, it’s actually pretty straightforward. In SQL Server Management Studio, there’s a new tab named Utility Explorer, and a Getting Started window that includes shortcuts to wizards that you can use to set up a UCP and enroll additional server instances.

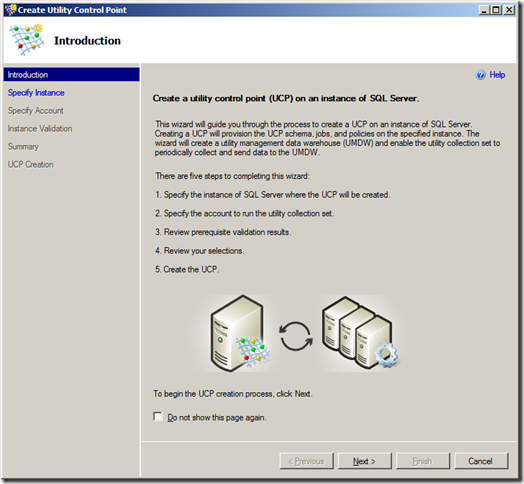

Clicking the Create a Utility Control Point link starts the following wizard:

The first step is to specify the SQL Server instance that you want to designate as a UCP. This server instance will host the central system management data warehouse where the resource utilization and health data will be stored.

Next you need to specify the account that will be used to run the data collection process. This must be a domain account rather than a built-in system account (you can specify the account that the SQL Server Agent runs as, but again this must be a domain account).

Now the wizard runs a number of verification checks as shown here:

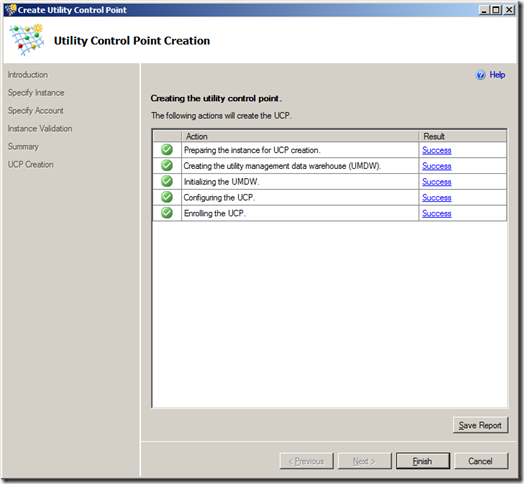

Assuming all of the verification checks succeed, you’re now ready to create the UCP.

The wizard finally performs the tasks that are required to set up the UCP and create the management data warehouse.

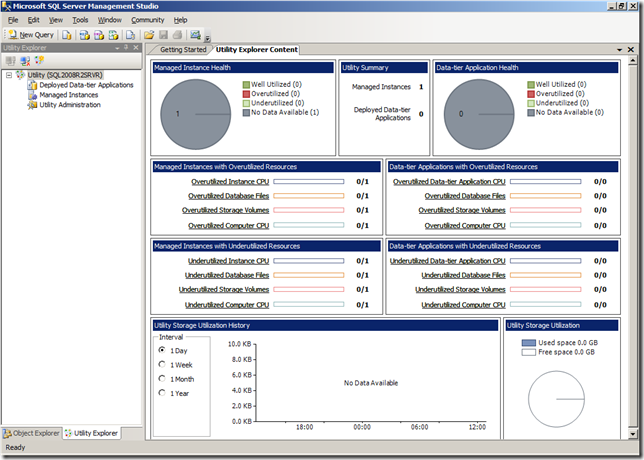

After you’ve created the UCP, you can view the Utility Control Content window to see the overall health of all enrolled SQL Server instances. At this point, the only enrolled instance is the UCP instance itself, and unless you’ve waited for a considerable amount of time, there will be no data available. However, you can at least see the dashboard view and note that it shows the resource utilization levels for all managed instances and data-tier applications (another new concept in SQL Server 2008 R2 – think of them as the unit of deployment for a database application, including the database itself plus any server-level resources, such as logins, that it depends on).

To enroll a SQL Server instance, you can go back to the Getting Started window and click Enroll Instances of SQL Server with a UCP. This starts the following wizard:

As before, the first step is to specify the instance you want to enroll. I’ve enrolled a named instance on the same physical server (actually, it’s a virtual server but that’s not really important!), but you can of course enroll any instance of SQL Server 2008 R2 in your organization (it’s quite likely that other versions of SQL Server will be supported in the final release, but in the November CTP only SQL Server 2008 R2 is supported).

As before, the wizard performs a number of validation checks.

Then you’re ready to enroll the instance.

The wizard performs the necessary tasks, including setting up the collection set on the target instance.

When you’ve enrolled all of the instances you want to manage, you can view the overall database server resource health from a single dashboard.

In this case, I have enrolled two server instances (the UCP itself plus one other instance) and I’ve deliberately filled a test database. Additionally, the virtual machine on which I installed these instances has only a small amount of available disk space. As a result, you can see that there is some over-utilization of database files and storage volumes in my “datacenter”. To troubleshoot this over-utilization and find the source of the problem, I can click the Managed Instances node in the Utility Explorer window and select any instances that show over- (or under-) utilization to get a more detailed view.

Of course, your definition of “over” or “under” utilized might differ from mine (or Microsoft’s!), so you can configure the thresholds for the policies that are used to monitor resource utilization, along with how often the data is sampled and how many policy violations must occur in a specified period before the resource is reported as over- or under-utilized.
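The interplay between thresholds and violation counts is easier to see in code. The following Python sketch is only an illustration of the idea under stated assumptions, not the utility's actual evaluation logic: the `UtilizationPolicy` fields, the `classify` function, and the rule that "over" is checked before "under" are all hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class UtilizationPolicy:
    # All field names are illustrative assumptions, not product settings.
    over_threshold: float   # percent above which a sample violates the "over" policy
    under_threshold: float  # percent below which a sample violates the "under" policy
    window_samples: int     # number of most recent samples in the evaluation period (> 0)
    min_violations: int     # violations needed in the period before reporting

def classify(samples, policy):
    """Return "over", "under", "well", or "no data" for a list of percentages."""
    # Only the most recent samples count toward the evaluation period.
    window = samples[-policy.window_samples:]
    if not window:
        return "no data"
    over = sum(1 for s in window if s > policy.over_threshold)
    under = sum(1 for s in window if s < policy.under_threshold)
    # A single spike is not enough; violations must reach the configured count.
    if over >= policy.min_violations:
        return "over"
    if under >= policy.min_violations:
        return "under"
    return "well"

# Example values chosen for illustration only.
example_policy = UtilizationPolicy(
    over_threshold=70.0, under_threshold=10.0, window_samples=4, min_violations=2
)
example_state = classify([50.0, 80.0, 90.0, 60.0], example_policy)
```

With these example values, one sample above 70% in the last four would still count as well utilized, while two or more would be reported as over-utilized. Raising `min_violations` makes the report less sensitive to short spikes.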

These policy settings are global, and therefore apply to all managed instances. You can set individual policy settings to override the global policies for specific instances, though that does add to the administrative workload and should probably be considered the exception rather than the rule.
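Conceptually, the relationship between global and per-instance settings works like a default with overrides. Here is a minimal Python sketch of that idea; the setting names, the instance names, and the `effective_policy` helper are hypothetical and do not correspond to any actual SQL Server object.

```python
# Hypothetical global settings that apply to every managed instance.
GLOBAL_POLICY = {
    "cpu_over_pct": 70.0,
    "cpu_under_pct": 0.0,
    "storage_over_pct": 70.0,
}

# Hypothetical per-instance overrides; most instances should have none.
INSTANCE_OVERRIDES = {
    "SRV1\\TEST": {"storage_over_pct": 90.0},
}

def effective_policy(instance, global_policy, overrides):
    """Merge global settings with any overrides defined for one instance."""
    # Later keys win, so an instance-level value replaces the global one.
    return {**global_policy, **overrides.get(instance, {})}

test_instance_policy = effective_policy("SRV1\\TEST", GLOBAL_POLICY, INSTANCE_OVERRIDES)
other_instance_policy = effective_policy("SRV2", GLOBAL_POLICY, INSTANCE_OVERRIDES)
```

Keeping the override table small reflects the point above: every entry in it is something extra to maintain, so the global settings should do most of the work.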

My experiment with utility control point-based multi-server management was conducted with the November community technology preview (CTP), and I did encounter the odd problem with collector sets failing to upload data. However, assuming these kinks are ironed out in the final release (or were caused by some basic configuration error of my own!), this looks to be the natural evolution of the data collector that was introduced in SQL Server 2008, and should ease the administrative workload for many database administrators.