“Denali” is the code-name for the next release of Microsoft SQL Server, and a community technology preview (CTP) is available for download from here. My colleague Geoff Allix has already posted a couple of articles about the enhancements Denali includes for debugging Transact-SQL scripts here and here, and as the Content Master data platform team continues to investigate the CTP, I’m sure more posts will appear. In this post, I want to discuss a new feature that makes it easier to delegate server-level administrative tasks – user-defined server roles.

If you’re familiar with previous releases of SQL Server, you’ll know that there are essentially two levels of security principal within SQL Server (well, all right, three if you include the operating system) – server-level principals, such as logins, and database-level principals, such as users. Permissions can be granted to these principals to allow them to use or manage resources (generally known as securables) at the relevant level. For example, you can grant permissions on server-level securables (such as endpoints and databases) to server-level principals, and you can grant permissions on database-level securables (such as tables and views) to database-level principals. Obviously, managing permissions for individual principals can become complex (and error-prone) as the number of principals increases, so, in common with most software systems, SQL Server supports grouping principals into roles. You grant the required permissions to the role, and then simply add principals to, or remove them from, the role to allow or disallow their access to the securables.

So far, so ordinary.

Previous releases of SQL Server included a pre-defined set of server-level and database-level roles that are already granted commonly required permissions. You can simply add your principals to these roles (for example, logins at the server level or users at the database level) to quickly give people access to the resources they need while maintaining the principle of “least privilege” (i.e. not granting any permissions to anyone who doesn’t require them). Additionally, you can create your own user-defined database-level roles, but crucially, until SQL Server “Denali” you could not create your own user-defined server-level roles.
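If you want to see which pre-defined server-level roles an instance provides, a query along the following lines should list them. This is a sketch that assumes the is_fixed_role column that sys.server_principals exposes in SQL Server 2012 and later; the Denali CTP may differ in detail.

```sql
-- List the fixed (pre-defined) server roles on this instance.
-- Assumption: sys.server_principals includes the is_fixed_role column
-- (present in SQL Server 2012 and later).
SELECT name
FROM sys.server_principals
WHERE type = 'R'            -- 'R' = server role
  AND is_fixed_role = 1     -- excludes public and any user-defined roles
ORDER BY name;
```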

To understand why the ability to create and manage your own server-level roles is useful, let’s consider a scenario where a corporation uses a SQL Server instance to host multiple application databases. Many of these databases are used by internal “home grown” ASP.NET Web applications or client/server applications that use Windows integrated authentication, and to control access to these databases, the DBA has simply created logins in SQL Server for the appropriate Windows Active Directory groups. However, the environment also includes a couple of off-the-shelf applications that do not support Windows integrated authentication, and therefore require their own SQL Server logins. Let’s also suppose that these applications are supported by a team of dedicated application administrators who need to be able to manage the SQL Server logins for the applications, for example to change their passwords periodically.

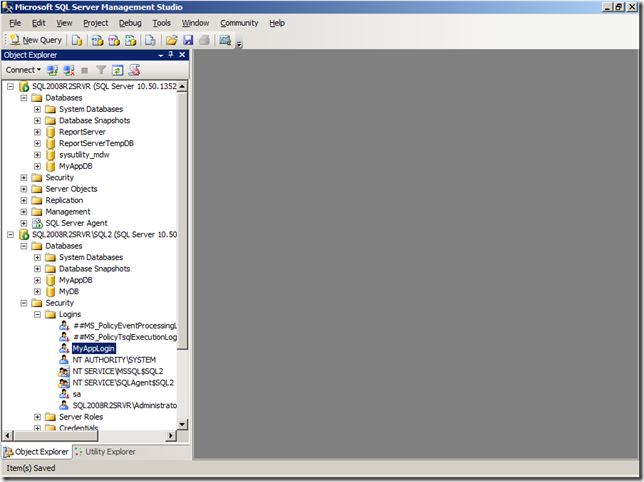

To accomplish this, I can create a user-defined server role by right-clicking the Server Roles folder in SQL Server Management Studio and clicking New Server Role, as shown below. Alternatively, I can use the new CREATE SERVER ROLE Transact-SQL statement.

Clicking New Server Role in SQL Server Management Studio opens the New Server Role dialog box, in which I can define the server role. In this case, I want to create a role named SQLAccountsAdmin, which will be owned by the built-in sa login. I can also specify the server-level securables on which I want to assign permissions, and then select each securable and set the required permissions. In this case, I’ve selected the AcctsPackage and AppSvcAccount logins (yes, principals can also be securables!) and granted the full set of available permissions on these logins to the SQLAccountsAdmin role.
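The role definition from the dialog box can also be sketched in Transact-SQL. This assumes the CREATE SERVER ROLE syntax as released in SQL Server 2012 (the CTP may differ); the AUTHORIZATION clause sets the owner, here the built-in sa login.

```sql
-- Create the user-defined server role, owned by the built-in sa login.
CREATE SERVER ROLE SQLAccountsAdmin AUTHORIZATION sa;

-- Confirm that the role now exists as a server-level principal.
SELECT name, type_desc          -- type_desc should be SERVER_ROLE
FROM sys.server_principals
WHERE name = N'SQLAccountsAdmin';
```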

To grant permissions to a user-defined server role by using Transact-SQL, you use the GRANT, DENY, and REVOKE statements just as you would for any other server-level principal.
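As a sketch of the Transact-SQL equivalent of the permissions set in the dialog box, the following grants the four permissions that apply to a login securable (ALTER, CONTROL, IMPERSONATE, and VIEW DEFINITION) on each of the two logins. It assumes the SQLAccountsAdmin role and the AcctsPackage and AppSvcAccount logins already exist.

```sql
-- Grant the full set of login permissions on both application logins to the role.
-- Assumes the AcctsPackage and AppSvcAccount SQL Server logins already exist.
GRANT ALTER, CONTROL, IMPERSONATE, VIEW DEFINITION
    ON LOGIN::AcctsPackage TO SQLAccountsAdmin;
GRANT ALTER, CONTROL, IMPERSONATE, VIEW DEFINITION
    ON LOGIN::AppSvcAccount TO SQLAccountsAdmin;

-- Review what the role has been granted on server principals (class 101).
SELECT secur.name AS login_securable, perm.permission_name, perm.state_desc
FROM sys.server_permissions AS perm
JOIN sys.server_principals AS grantee ON grantee.principal_id = perm.grantee_principal_id
JOIN sys.server_principals AS secur   ON secur.principal_id   = perm.major_id
WHERE grantee.name = N'SQLAccountsAdmin'
  AND perm.class_desc = N'SERVER_PRINCIPAL';

-- REVOKE and DENY follow the same pattern, for example:
-- REVOKE IMPERSONATE ON LOGIN::AppSvcAccount FROM SQLAccountsAdmin;
```

Note that CONTROL already implies the other three permissions; granting all four simply mirrors selecting the full set in the dialog box.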

Now I need to add some server-level principals to the role, so that they can use their role membership to gain the permissions required to manage the two SQL Server logins. You can do this on the Members tab of the dialog box or by using the ALTER SERVER ROLE Transact-SQL statement.
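Adding members with Transact-SQL might look like the following sketch. The Windows group CONTOSO\AppAdmins is a hypothetical name for the application administrators' group, and the example assumes a login has already been created for it (CREATE LOGIN ... FROM WINDOWS).

```sql
-- Add the (hypothetical) application administrators' Windows group to the role.
-- Assumes a login for [CONTOSO\AppAdmins] already exists on the instance.
ALTER SERVER ROLE SQLAccountsAdmin ADD MEMBER [CONTOSO\AppAdmins];

-- List the current members of the role.
SELECT r.name AS role_name, m.name AS member_name
FROM sys.server_role_members AS rm
JOIN sys.server_principals AS r ON r.principal_id = rm.role_principal_id
JOIN sys.server_principals AS m ON m.principal_id = rm.member_principal_id
WHERE r.name = N'SQLAccountsAdmin';

-- To remove the member later:
-- ALTER SERVER ROLE SQLAccountsAdmin DROP MEMBER [CONTOSO\AppAdmins];
```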

Finally, it’s worth noting that you can nest user-defined server roles within other server roles, including the fixed server roles provided out-of-the-box by SQL Server. In general, I’d advise against this, as it’s easy to find yourself granting unnecessary and unintended permissions, but it’s shown here for completeness.
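To illustrate the risk, here is a sketch that nests SQLAccountsAdmin inside the fixed securityadmin role and then undoes it. While the nesting is in place, every member of SQLAccountsAdmin inherits securityadmin’s permissions (which include ALTER ANY LOGIN), not just the rights on the two application logins.

```sql
-- Nest the user-defined role inside the fixed securityadmin role.
-- Caution: members of SQLAccountsAdmin now inherit ALTER ANY LOGIN (and more)
-- through securityadmin, far beyond the two logins they actually need.
ALTER SERVER ROLE securityadmin ADD MEMBER SQLAccountsAdmin;

-- Remove the nesting again to get back to least privilege.
ALTER SERVER ROLE securityadmin DROP MEMBER SQLAccountsAdmin;
```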

So, there you have it – user-defined server roles in SQL Server “Denali” provide a flexible way to delegate administrative tasks at the server level.